Prompt

“Break these product reviews from column T into intuitive categories.”

Source

I used this Amazon Product Reviews Dataset from Kaggle to test the prompt.

Models

I tested this prompt using ChatGPT 4o, ChatGPT o3, and Claude 4 Opus.

Results

ChatGPT 4o

Suggested Categories:

- Product Satisfaction
  - Reviews expressing love, satisfaction, or praise for the product.
  - Example: “Love it! I purchased this as I have had Audible for years…”

- Product Comparison
  - Users comparing this product to Echo, Dot, or other Amazon devices.
  - Example: “I have the Echo and a Dot and now the Tap, too…”

- Gifting Experience
  - Reviews mentioning the product being bought as a gift.
  - Example: “Brought this as a gift for my sister…”

- Functionality Issues
  - Complaints or negative feedback on usability or performance.
  - Example: “I’m thinking this must of been a fluke, it never worked…”

- Feature Commentary
  - Opinions on specific features like Bluetooth, voice control, audio quality.
  - Example: “As a Bluetooth speaker, the Tap rocks!”

- Design and Build
  - Mentions of the physical look or design quality.
  - Example: “Like a previous reviewer mentioned, it is fabric-covered…”

- Ease of Use or Learning Curve
  - Comments about setup, user-friendliness, or needing to ‘tap’.
  - Example: “Did not know you had to ‘tap’ it…”

ChatGPT o3

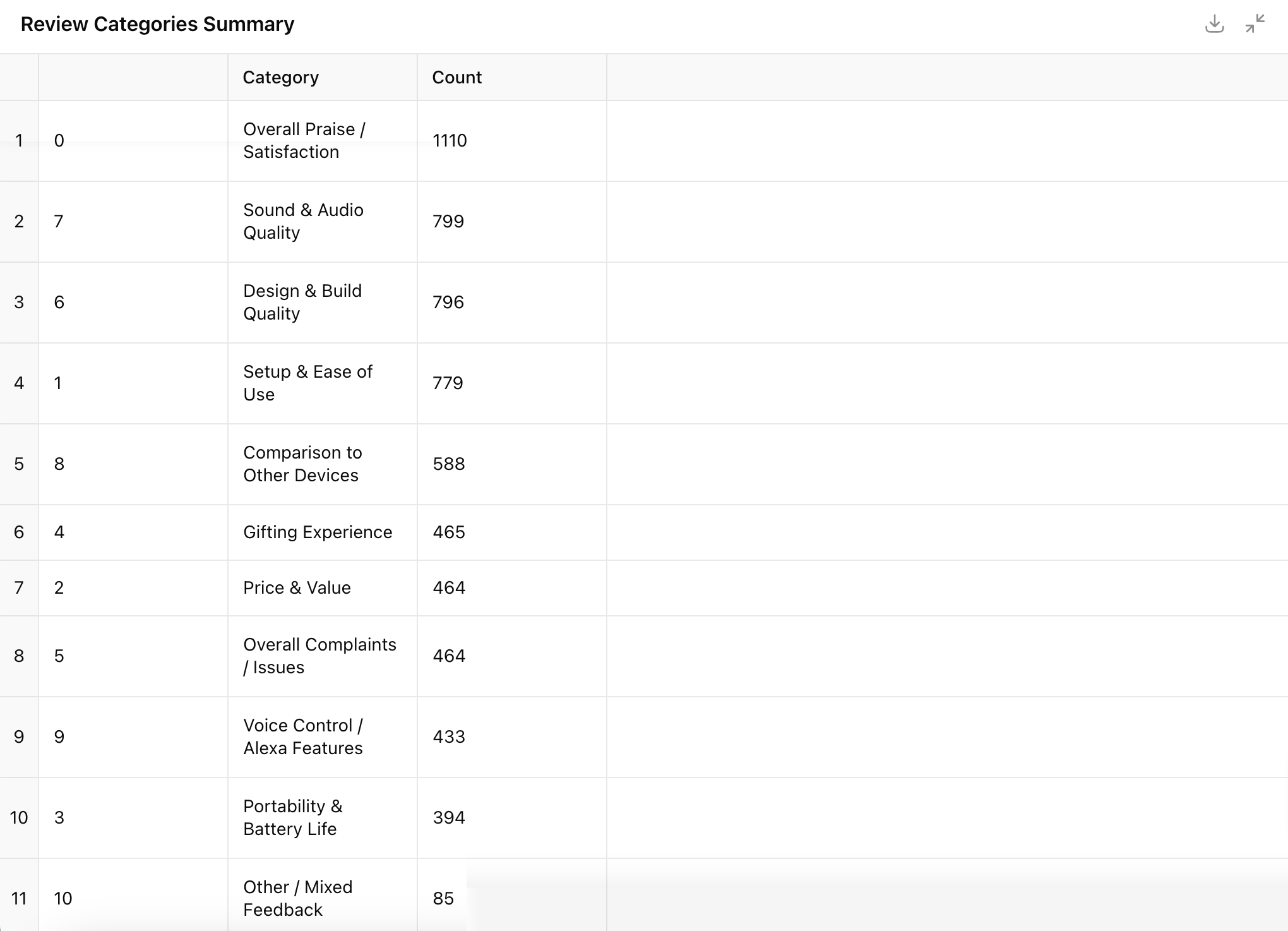

o3 returned a spreadsheet that included counts for each category.

Claude 4 Opus

Claude returned a list similar to the one from ChatGPT 4o, but it also included distribution metrics (e.g., ~68%) and a high-level summary at the end.

Based on analysis of the product reviews dataset, here are the intuitive categories that emerge from customer feedback:

1. Product Comparison & Decision Journey (~68%)

- Example: “I initially had trouble deciding between the paperwhite and the voyage…”

- Includes comparisons between Paperwhite, Voyage, Oasis, Fire, and competing brands

2. Reading Comfort & Eye Health (~65%)

- Example: “The Fire used to make my eyes hurt if I read too long. Haven’t experienced that with the Paperwhite yet.”

- Covers backlight quality, glare reduction, and readability in different lighting

3. Feature Functionality (~45%)

- Includes technical capabilities and how well features work in practice

- Covers integration with services like Goodreads

4. Portability & Physical Design (~43%)

- Example: Reviews mentioning ‘light’, ‘portable’, ‘fits in pocket’, ‘perfect for travel’

- Includes durability and material quality assessments

5. User Experience & Interface (~41%)

- Covers menu systems, settings, and learning curve

- Includes comments from both tech-savvy and non-technical users

6. Display & Screen Quality (~40%)

- Discusses pixel density, sharpness, and text rendering

- Includes comparisons to paper books and other devices

7. Value & Pricing Assessment (~32%)

- Includes budget considerations and cost-benefit analyses

- Covers both ‘great value’ and ‘overpriced’ perspectives

8. Battery Performance (~24%)

- Example: “Battery lasts for weeks” or complaints about frequent charging

- Real-world usage scenarios vs. advertised battery life

9. Customer Service Experiences (~67%)

- Includes both positive support experiences and frustration with service

- Covers replacement processes and defective units

10. Gift Reviews (~15-20%)

- Common for birthdays, Christmas, Mother’s/Father’s Day

- Often includes whether the recipient liked or used the gift

11. Long-term Usage Reports (~20%)

- Includes “still working after X years” success stories

- Also covers devices that failed after warranty period

12. Sentiment-Based Categories

- Highly Satisfied (~64%): Reviews with strong positive language

- Dissatisfied (~11%): Reviews expressing disappointment or regret

- Neutral/Mixed (~25%): Balanced reviews with pros and cons

Key Insights:

- Most reviews (83%) rate products 4-5 stars, indicating general satisfaction

- Comparison shopping is a major theme – customers research extensively

- Eye comfort is a critical factor for e-reader adoption

- Amazon/customer service interactions significantly impact review sentiment

- Many reviews combine multiple categories (e.g., comparing devices while discussing eye comfort)
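The percentages in Claude's list add up to far more than 100%, which fits the last insight: one review can land in several categories at once. Here is a minimal sketch of that kind of multi-label counting. The keyword rules, the `tag_review` and `category_shares` helpers, and the four sample reviews are all hypothetical stand-ins. This is not how Claude produced its numbers.

```python
from collections import Counter

# Hypothetical keyword rules for illustration only; a real run would use
# the labels assigned by the model rather than substring matching.
CATEGORY_KEYWORDS = {
    "Product Comparison": ["paperwhite", "voyage", "fire", "compared"],
    "Reading Comfort": ["eyes", "glare", "backlight"],
    "Battery Performance": ["battery", "charging"],
    "Gift Reviews": ["gift", "christmas", "birthday"],
}


def tag_review(text):
    """Return every category whose keywords appear in the review."""
    lowered = text.lower()
    return [
        category
        for category, keywords in CATEGORY_KEYWORDS.items()
        if any(keyword in lowered for keyword in keywords)
    ]


def category_shares(reviews):
    """Percentage of reviews that mention each category (multi-label)."""
    counts = Counter()
    for review in reviews:
        counts.update(tag_review(review))
    return {
        category: 100 * counts[category] / len(reviews)
        for category in CATEGORY_KEYWORDS
    }


# Made-up sample reviews, loosely modeled on the examples above.
reviews = [
    "The Fire used to make my eyes hurt; the Paperwhite does not.",
    "Battery lasts for weeks.",
    "Bought as a Christmas gift, and the battery is great.",
    "I had trouble deciding between the Paperwhite and the Voyage.",
]

shares = category_shares(reviews)
for category, share in shares.items():
    print(f"{category}: ~{share:.0f}%")
```

Because each review is counted once per matching category, the shares are independent of one another and do not need to sum to 100%.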

Note

These intuitive categories were identified through content analysis and can serve as labels for organizing reviews. For more comprehensive topic modeling, you can use machine learning algorithms like LDA or BERTopic to discover latent topics statistically. You can get a lay of the land with my Machine Learning Model Picker (pre-filtered for topic modeling).