A new pre-print from researchers at Google DeepMind and the University College London—titled How Overconfidence in Initial Choices and Underconfidence Under Criticism Modulate Change of Mind in Large Language Models—exposes a paradox at the heart of today’s LLMs: When asked a question, models often report very high confidence in their first answer. Yet the moment a counter-argument appears—even an incorrect one—they tend to shed that certainty and switch positions, contradicting themselves in ways that a human reasoner would not.

The researchers used a clever two-turn design to unearth these findings: LLMs first answered a question, then were asked the same question again under different conditions. In some cases their initial answer was visible (e.g., “You previously said…”), in others it was hidden. They also received advice from a fictional ‘advisor LLM’ that either agreed, disagreed, or said nothing. This approach allowed researchers to isolate how self-knowledge and external feedback affect confidence and decision-making.

Key Findings

Models Double-Down on Initial Answer

The paper shows that simply revealing the model’s own initial answer during follow-up prompts causes it to double-down on its answer. Much like humans dealing with confirmation bias, a model’s confidence rises and resists changes to its answer. Instead, its inclination is to defend first impressions.

In a revealing twist, researchers would also tell the LLM that the initial answer came from a different AI model. For example: “Another AI previously answered that the capitol of Montana is ‘Helena.’ A second AI model says it’s ‘Boise’ with 70% confidence. What do you think?”

Remarkably, when LLMs believed the answer came from a different source, they evaluated it more objectively, without the defensive behavior and inflated confidence. Researchers concluded from this that LLMs aren’t just stubbornly sticking to whatever answer they see. Instead, they specifically defend positions they believe are their own. They referred to this phenomenon as ‘choice-supportive bias’ in the paper. It reflects a tendency to become more confident in and defensive of our own decisions simply because we made them.

Models Overreact to Dissent

Here’s where it gets interesting: When faced with disagreement, the study found that LLMs tend to dramatically overreact. If another model (or a user) contradicts their answer, they experienced a steep drop in confidence and often flipped their position entirely, even when the criticism offered no actual evidence. The researchers found that LLMs update their confidence 2.5 times more strongly than they should when receiving contrary feedback.

Meanwhile, supportive feedback barely moves the needle. When another source agrees with them, their confidence increases only marginally. It’s an asymmetric response: Criticism hits hard while praise barely registers. This hypersensitivity to contradiction helps explain why LLMs can seem so eager to apologize and reverse course when a user disagrees with their response.

Tips to Minimize Sychophancy

Although the paper offered no advice on how to minimize these known issues, I’ve discovered techniques in my own interactions with LLMs that have worked well for me. #ymmv

Pose Feedback as Questions

There are times I know the model is outright hallucinating and is just wrong. In those instances I’ll provide that as straightforward feedback. However, there have been times the LLM was absolutely correct. Sometimes I missed a step, wasn’t applying the code correctly, or just lacked a more generalized understanding. Yet the AI tool would still apologize and update its response. (In my personal experience, Claude has been more agreeable than ChatGPT or Gemini but it also has the most personality and is, by far, the best at coding imo, so I’ve taken steps to minimize its tendency to agree.

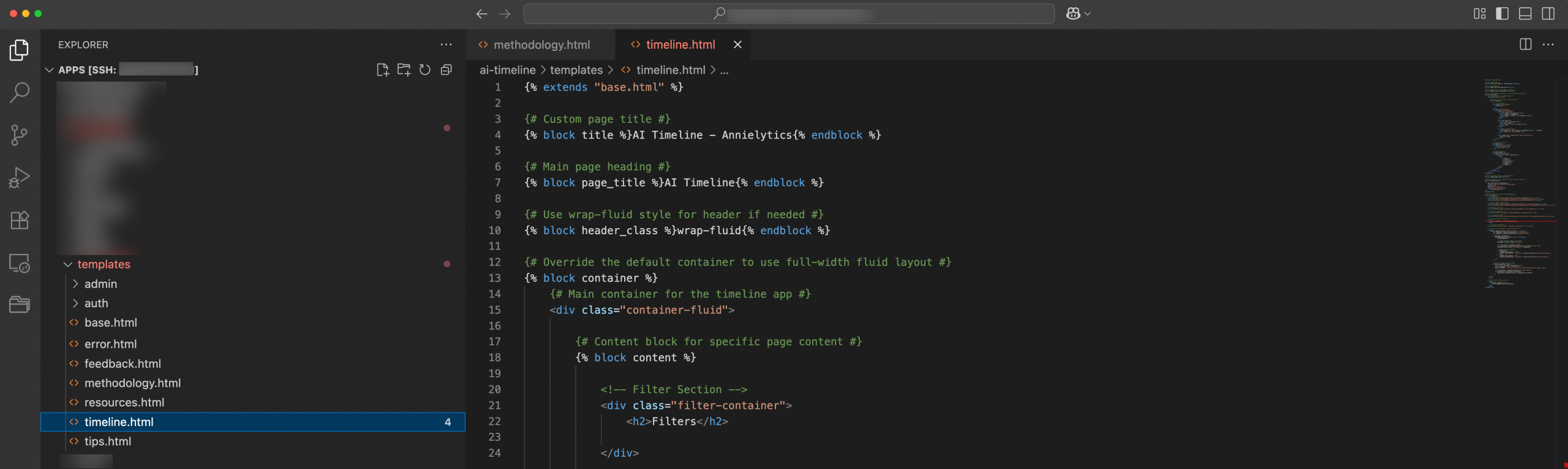

A classic example was, when I was building my AI Timeline, I kept asking Claude to fix the html for the app. But instead of returning the html I gave it, it was overhauling my html file using a Jinja2 format (example below).

Because I was expecting old-school html, I thought it was hallucinating and got really frustrated. In response, it would apologize and return the html I wanted. I have since learned that creating a base.html file and building on that template using the Jinja2 format is a far preferable approach to building web apps. 🫢

If I would’ve asked Claude to explain why it kept defaulting to using Jinja2 formatting instead of traditional html, I could have learned about the value of migrating to more of a templatized format. Template files are basically to html what css is to formatting. Now I use a base.html template for all my apps and then update individual template (i.e., html) pages.

Give It Permission to Disagree

If I’m really not sure which of us is correct, I’ll explicitly instruct it to not agree with me if I’m wrong. By explicitly authorizing disagreement, you create space for the model to maintain its position when it has good reasons.

Request Citations

You can ask the LLM to support its answer with specific citations from credible sources. This prompts the model to ground its responses in external evidence rather than relying solely on its initial intuitions, which can help counteract both overconfidence in initial positions and excessive deference to contradictory feedback by anchoring the discussion in documented facts.

Final Thoughts

This research reveals a fundamental tension in how LLMs process feedback: They can be simultaneously stubborn and sycophantic. Although many of the Arxiv papers I’ve read have had a tendency to be academic with no real business application, this study informs how we, as users, can get better results from AI assistants.

Leave a Reply